Researchers have developed an integrated framework combining text mining and knowledge graph modeling to support biopharmaceutical process optimization. The system was designed to extract structured information from published literature and organize it into a queryable format relevant to process development and manufacturing.

Key process parameters in biopharmaceutical production – such as temperature, pH, dissolved oxygen, nutrient levels, and agitation rate – can affect yield, productivity, and product quality attributes. Although substantial data are available in the scientific literature, synthesizing findings across studies remains labor-intensive.

In the study, the researchers applied natural language processing techniques to peer-reviewed publications addressing bioprocess optimization. The system identified entities including cell lines, culture conditions, process variables, and reported outcomes. It then mapped relationships among these entities and incorporated them into a knowledge graph structure.
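As a rough illustration of the extract-then-link idea described above, the sketch below uses simple dictionary matching and sentence-level co-occurrence. This is an assumption made for illustration only: the article does not specify which natural language processing techniques the researchers used, and the vocabulary, sample sentences, and reference IDs here are all hypothetical.

```python
import re
from collections import defaultdict
from itertools import combinations

# Hypothetical vocabulary: entity type -> surface terms (not taken from the study).
VOCAB = {
    "cell_line": ["CHO", "HEK293"],
    "process_variable": ["temperature", "pH", "dissolved oxygen"],
    "outcome": ["titer", "productivity"],
}

def find_entities(sentence):
    """Return the set of (entity_type, term) pairs found by whole-word dictionary lookup."""
    found = set()
    for etype, terms in VOCAB.items():
        for term in terms:
            pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
            if re.search(pattern, sentence, re.IGNORECASE):
                found.add((etype, term))
    return found

def build_graph(documents):
    """Link entities of different types that co-occur in a sentence.

    Each edge keeps the set of source IDs in which the pair was seen.
    """
    graph = defaultdict(set)  # (entity_a, entity_b) -> {source_id, ...}
    for source_id, text in documents.items():
        # Naive sentence split on terminal punctuation followed by whitespace.
        for sentence in re.split(r"(?<=[.!?])\s+", text):
            entities = sorted(find_entities(sentence))
            for a, b in combinations(entities, 2):
                if a[0] != b[0]:  # skip pairs of the same entity type
                    graph[(a, b)].add(source_id)
    return graph

# Made-up sample text standing in for publication content.
docs = {
    "ref-1": "Lowering temperature increased titer in CHO cultures. The pH was held constant.",
    "ref-2": "Temperature shifts improved productivity.",
}
graph = build_graph(docs)
```

Co-occurrence is a deliberately crude stand-in for relationship mapping. It records that two entities were mentioned together, not the direction or nature of the effect, which a real extraction pipeline would need to capture.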

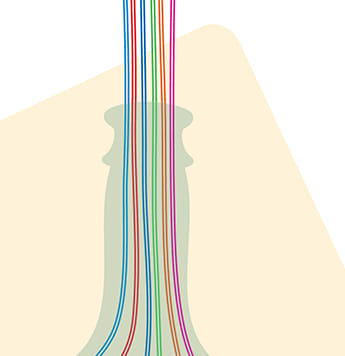

The resulting knowledge graph linked process parameters with outcomes such as protein expression levels, productivity, and quality attributes. Users could query the graph to explore associations and trace relationships back to their source publications. This structure let users visualize interconnected variables across multiple studies instead of relying on isolated reports.
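A query of this kind might look like the following minimal sketch, which assumes each edge is stored as a parameter, relation, outcome, and source record. The record layout, the `outcomes_for` helper, and the sample values are hypothetical and do not come from the study.

```python
from collections import namedtuple

# Hypothetical edge record; field names and sample values are illustrative only.
Edge = namedtuple("Edge", ["parameter", "relation", "outcome", "source"])

EDGES = [
    Edge("temperature", "associated_with", "productivity", "ref-A"),
    Edge("pH", "associated_with", "quality attributes", "ref-B"),
    Edge("temperature", "associated_with", "protein expression", "ref-C"),
    Edge("temperature", "associated_with", "productivity", "ref-C"),
]

def outcomes_for(parameter, edges):
    """Map each outcome linked to `parameter` to the sorted sources reporting it."""
    result = {}
    for edge in edges:
        if edge.parameter == parameter:
            result.setdefault(edge.outcome, set()).add(edge.source)
    return {outcome: sorted(sources) for outcome, sources in result.items()}

hits = outcomes_for("temperature", EDGES)
for outcome, sources in hits.items():
    print(f"temperature -> {outcome} (reported in: {', '.join(sources)})")
```

Keeping the source on every edge is what allows an association to be traced back to the publications that reported it, and grouping by outcome shows where several studies point to the same link.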

The authors demonstrated the framework using case examples in monoclonal antibody manufacturing. Queries identified reported associations between specific culture conditions and productivity measures, as well as links between purification strategies and quality attributes. Each extracted relationship remained connected to its original reference to support transparency.

Although knowledge graphs have been used in other biomedical domains, their application in biopharmaceutical process development has been limited. By integrating automated text extraction with graph-based modeling, the framework is intended to support literature review, hypothesis generation, and experimental planning.

The researchers noted that system performance depends on the consistency and completeness of published data. Variability in terminology across studies may affect entity recognition and relationship mapping. The framework does not replace experimental validation but is designed to assist with data organization and interpretation.
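The terminology problem can be illustrated with a small normalization step that maps variant spellings to one canonical term before entities are matched. This is a sketch under stated assumptions: the synonym table and the `normalize` helper are hypothetical, and the article does not describe how, or whether, the framework handles synonyms.

```python
import re

# Hypothetical synonym table: variant spelling -> canonical term.
SYNONYMS = {
    "dissolved o2": "dissolved oxygen",
    "mab": "monoclonal antibody",
    "monoclonal antibodies": "monoclonal antibody",
    "stirring speed": "agitation rate",
}

def normalize(term):
    """Lowercase, collapse whitespace, then map known variants to a canonical term."""
    key = re.sub(r"\s+", " ", term.strip().lower())
    return SYNONYMS.get(key, key)

variants = ["Dissolved  O2", "mAb", "Stirring speed", "pH"]
canonical = [normalize(v) for v in variants]
```

Terms missing from the table pass through unchanged apart from lowercasing, so any variant the table does not cover would still be treated as a separate entity. That is the kind of gap the researchers' caveat describes.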

The study focused on development and validation of the informatics approach rather than direct manufacturing outcomes. Additional evaluation will be required to determine how such systems could be incorporated into routine process optimization workflows or regulatory documentation strategies.